SurveyMonkey

Statistical significance

You can check whether survey answers differ in a statistically significant way between groups of respondents. Here's how to use SurveyMonkey's statistical significance feature.

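- Apply a compare rule to your survey, then turn on statistical significance. When you select the groups you want to compare, your results are broken down by those groups and shown side by side.

- Read the resulting table to see whether answers differ significantly between the respondent groups.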

Showing statistical significance

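Here is a step-by-step outline of how to run a survey that lets you check statistical significance.

- Step 1: Add closed-ended (multiple choice) questions to your survey

- Step 2: Collect responses

- Step 3: Apply a compare rule

- Step 4: Review the table

- Step 5: Share the results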

Example survey

Suppose you want to know whether men are significantly more satisfied with a product than women are.

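- Add the following two multiple choice questions to your survey:

  - What is your gender? (Male, Female)

  - How satisfied are you with our product? (Satisfied, Dissatisfied)

- Collect responses until at least 30 respondents have chosen Male and at least 30 have chosen Female.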

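- Apply a compare rule to the gender question, selecting both answer choices (Male and Female).

- The table below the chart for the product satisfaction question shows whether satisfaction differs significantly between men and women.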
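To make the effect of a compare rule concrete, here is a minimal Python sketch of what "breaking results down by group" means. It tallies hypothetical (gender, satisfaction) responses per group, checks the 30-per-group minimum from the steps above, and returns each group's share of "Satisfied" answers side by side. The record format, the `compare_by_group` helper, and the sample data are illustrative assumptions, not SurveyMonkey's implementation or export format.

```python
from collections import Counter

# Minimum responses per group, taken from the example steps above.
MIN_PER_GROUP = 30


def compare_by_group(records, positive="Satisfied", min_per_group=MIN_PER_GROUP):
    """Break hypothetical (group, answer) records down by group, as a compare rule displays them.

    Returns {group: {"n": responses in group, "share": fraction answering `positive`}}.
    Raises ValueError if any group has fewer than `min_per_group` responses.
    """
    totals = Counter(group for group, _ in records)
    positives = Counter(group for group, answer in records if answer == positive)
    table = {}
    for group, n in totals.items():
        if n < min_per_group:
            raise ValueError(f"group {group!r} has only {n} responses (need {min_per_group})")
        table[group] = {"n": n, "share": positives[group] / n}
    return table


# Hypothetical sample: 40 men (28 satisfied) and 40 women (24 satisfied).
responses = (
    [("Male", "Satisfied")] * 28 + [("Male", "Dissatisfied")] * 12
    + [("Female", "Satisfied")] * 24 + [("Female", "Dissatisfied")] * 16
)

for group, row in compare_by_group(responses).items():
    print(f"{group}: n={row['n']}, satisfied={row['share']:.0%}")
```

The statistical significance feature then tests whether two shares like these differ by more than chance would explain.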

What is statistical significance?

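A statistically significant difference is one that a statistical test shows to be larger than chance alone would plausibly produce when comparing one group's answers with another's. When a result is statistically significant, the difference between the numbers is likely real and can serve as solid support for your analysis. Significance does not, however, tell you whether the difference is large enough to matter. You still need to think about whether the result is meaningful: how you interpret it and what you do next are up to you as the analyst.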

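For example, suppose a company receives more complaints from female customers than from male customers. How can you tell whether the gap is large enough to act on? One effective approach is to run a survey and check whether male customers really are more satisfied with the product. SurveyMonkey's statistical significance feature applies a statistical formula, which makes it easier to determine whether male customers' satisfaction is truly significantly higher. That way, you can act on data rather than guesswork.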

- Calculating statistical significance

- No statistically significant difference

- Number of responses

Calculating statistical significance

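SurveyMonkey calculates statistical significance at a 95% confidence level. When an answer choice is flagged as statistically significant at this level, it means that if there were no real difference between the two groups, a difference at least this large would arise from chance or sampling error less than 5% of the time. This is often written as "p < 0.05".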
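One way to see what a 95% confidence level means in practice is a small simulation. The Python sketch below draws both groups from the same population, so there is no real difference between them. It then applies a pooled two-proportion z-test with the 1.96 cutoff and counts how often a difference is flagged anyway. That rate should land near 5%. The pooled z-test form, the 50% true rate, the group size of 200, and the trial count are all assumptions chosen for illustration, not SurveyMonkey settings.

```python
import math
import random


def z_statistic(p1, p2, n1, n2):
    """Pooled two-proportion z statistic (assumed test form, for illustration)."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        return 0.0  # both groups answered identically (all yes or all no)
    return (p1 - p2) / se


def false_positive_rate(true_p=0.5, n=200, trials=2000, critical=1.96, seed=0):
    """Fraction of trials flagged 'significant' when both groups share the same true rate."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Both groups come from the SAME population, so any gap is pure chance.
        x1 = sum(rng.random() < true_p for _ in range(n))
        x2 = sum(rng.random() < true_p for _ in range(n))
        if abs(z_statistic(x1 / n, x2 / n, n, n)) > critical:
            hits += 1
    return hits / trials


print(f"flagged as significant: {false_positive_rate():.1%} of trials")
```

With a 1.96 cutoff, roughly one comparison in twenty is flagged even though nothing differs. This is the "less than 5%" error rate described above.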

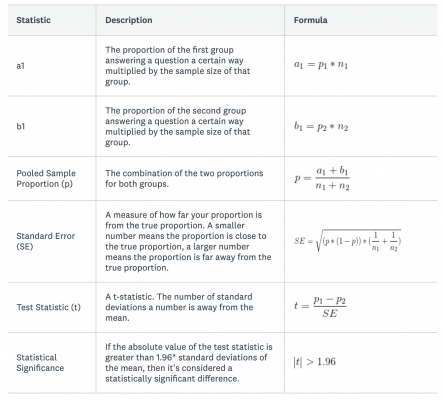

Statistical significance between groups is calculated with the following formula.
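The formula itself appears only as an image in the original article and is not reproduced in this text. A standard pooled two-proportion z-test matches the symbols used in this article (p1, p2, n1, n2) and the 1.96 threshold. It is shown here as an assumption about the formula's form, not as a transcription of SurveyMonkey's image.

```latex
\[
\bar{p} = \frac{p_1 n_1 + p_2 n_2}{n_1 + n_2}, \qquad
SE = \sqrt{\bar{p}\,(1 - \bar{p})\left(\frac{1}{n_1} + \frac{1}{n_2}\right)}, \qquad
z = \frac{p_1 - p_2}{SE}
\]
```

A difference is treated as significant at the 95% level when \(|z| > 1.96\).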

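*1.96 is the value used for a 95% confidence level: 95% of the area under the standard normal distribution lies within ±1.96 standard deviations of the mean. (With large samples, Student's t-distribution is nearly identical to the normal distribution.)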
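The 1.96 figure can be checked directly from the standard normal distribution. This Python sketch uses `statistics.NormalDist` from the standard library (Python 3.8+). `two_sided_critical_value` is a hypothetical helper name. It splits the remaining 5% evenly between the two tails and confirms that about 95% of the distribution lies within ±1.96.

```python
from statistics import NormalDist


def two_sided_critical_value(confidence=0.95):
    """Return the z value that leaves (1 - confidence) split evenly across both tails."""
    alpha = 1 - confidence
    return NormalDist().inv_cdf(1 - alpha / 2)


z95 = two_sided_critical_value(0.95)
# Share of the standard normal distribution lying within ±z95 of the mean.
coverage = NormalDist().cdf(z95) - NormalDist().cdf(-z95)
print(f"critical value: {z95:.2f}, coverage: {coverage:.3f}")
```

This prints a critical value of 1.96 and a coverage of 0.950. Passing `0.99` instead gives the stricter cutoff of about 2.58 that a 99% confidence level would use.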

Worked example

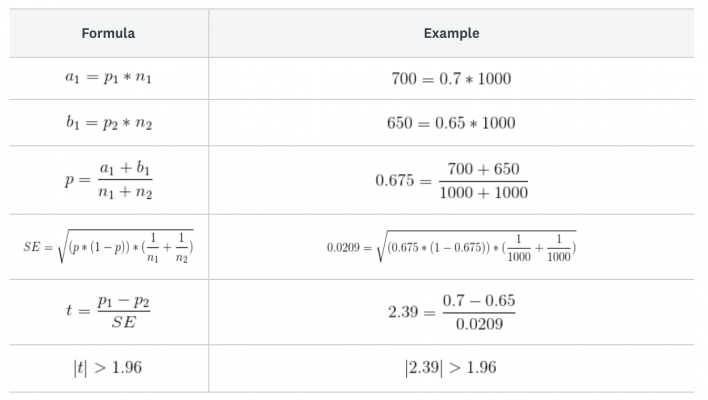

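Using the example above, let's test whether the percentage of male customers who say they are satisfied with the product differs significantly from the percentage of female customers who say so.

Suppose you collect responses from 1,000 men and 1,000 women, and 70% of male customers and 65% of female customers say they are satisfied with the product. Is 70% significantly higher than 65%?

Let's run the calculation with the following survey data.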

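- p1 (proportion of male customers satisfied with the product) = 0.7

- p2 (proportion of female customers satisfied with the product) = 0.65

- n1 (number of male respondents) = 1000

- n2 (number of female respondents) = 1000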
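These values can be plugged into a pooled two-proportion z-test in a few lines of Python. The test form is assumed to match the article's formula, whose image is not reproduced here. `two_proportion_z` is a hypothetical helper name. The two-sided p-value uses the standard normal distribution from Python's `statistics` module.

```python
import math
from statistics import NormalDist


def two_proportion_z(p1, p2, n1, n2):
    """Pooled two-proportion z statistic (assumed form of the article's formula)."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)  # overall satisfied share across both groups
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))  # standard error of p1 - p2
    return (p1 - p2) / se


z = two_proportion_z(p1=0.7, p2=0.65, n1=1000, n2=1000)
p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided p-value
print(f"z = {z:.2f}, p = {p_value:.3f}, significant at 95%: {abs(z) > 1.96}")
```

With these inputs, the statistic comes out at roughly 2.39, above the 1.96 cutoff. The two-sided p-value is below 0.05.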

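Because the absolute value of the test statistic is greater than 1.96, the difference between men's and women's responses is statistically significant. In other words, male customers tend to be more satisfied with the product than female customers.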

Hiding statistical significance

To hide statistical significance for all questions

- In the left sidebar, click the down arrow to the right of the compare rule.

- Click Edit rule.

- Click the toggle next to Show statistical significance to turn statistical significance off.

- Click [Apply].

To hide statistical significance for a specific question only

- Click Customize above the chart.

- Click the Display Options tab.

- Uncheck the box next to Statistical significance.

- Click [Save].

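When statistical significance is shown, the option to transpose the table (swap rows and columns) is turned on automatically. You can therefore also turn off statistical significance by unchecking this display option.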